AI Backlash Is Coming for Elections: 2026 Guide

The conversation around AI in elections has shifted from novelty to scrutiny. A recent Verge report, AI backlash is coming for elections, reflects a broader market reality in 2026: audiences are no longer impressed by synthetic polish alone. They want proof, context, and accountability.

For brands, agencies, and creators, that shift matters well beyond politics. Election-season skepticism tends to spill into everyday feeds, affecting how people respond to AI-generated graphics, voiceovers, captions, and claims. If your social media marketing strategy depends on speed and automation, it now has to include trust signals as a core requirement, not an afterthought.

Key takeaway: Election-era AI backlash changes how brands should test a social media marketing strategy, because trust and clarity now influence reach alongside creative quality.

What changed in the election conversation around AI

AI content used to be judged mostly on whether it looked convincing. In 2026, the conversation is more complicated. Voters, journalists, and platform users have seen enough manipulated media to ask a different set of questions: who made this, why was it made, and can it be verified?

The Verge piece captures this shift through the lens of elections, where AI is increasingly tied to misinformation concerns, labor debates, and public distrust. That matters for social distribution because election coverage often sets the tone for what people accept elsewhere online. When a political deepfake or synthetic ad campaign becomes a headline, audiences become more cautious about all AI-driven content.

This is why a strong social media marketing strategy can no longer treat AI as only an efficiency tool. It also needs to answer reputational questions. If content is automated, disclose it when appropriate. If claims are controversial, support them with accessible sources. If visuals are synthetic, avoid ambiguity that could make the audience feel misled.

For practical guidance on producing discoverable, helpful content, Google’s SEO Starter Guide remains a useful baseline. It reinforces a principle that applies here too: publish content that serves people first, not content that merely performs for algorithms.

Why election backlash affects everyday social distribution

Election backlash does not stay inside political campaigns. It changes platform behavior, audience expectations, and moderation sensitivity across categories. That has several downstream effects for social marketers:

- Users become more skeptical of polished visuals, especially when they feel mass-produced.

- Comment sections fill with authenticity checks, forcing brands to defend the source of their content.

- Platforms tighten enforcement around misleading media and manipulated claims.

- Creators who rely on AI-heavy outputs may see lower retention if audiences feel the brand voice is generic.

In other words, the problem is not AI itself. The problem is unearned confidence. A social media marketing strategy that uses AI to publish faster but fails to preserve identity, transparency, and relevance will struggle in an environment where people are already primed to question what they see.

This is especially relevant for short-form video, image carousels, and fast-turn commentary posts. If the audience senses that a message is optimized only for output volume, not for value, engagement tends to drop. Brands with stronger editorial judgment are more likely to outperform those that automate everything.

How to adapt your social media marketing strategy in 2026

There are two ways to respond to AI backlash: stop using AI, or use it more carefully. The second option is the only sustainable one. A modern social media marketing strategy should combine efficiency with visible human oversight.

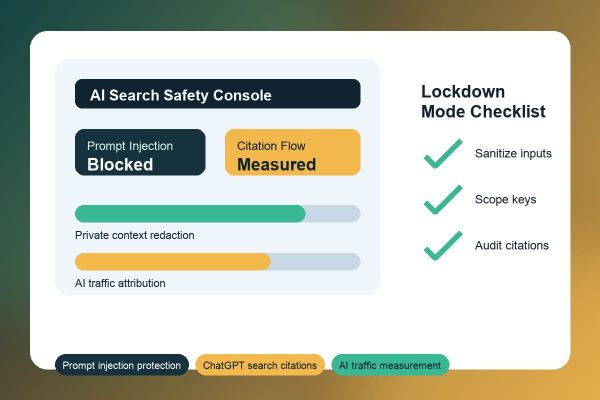

Start by defining which tasks AI can support and which tasks must stay human. AI can help with ideation, caption variants, content clustering, and first-draft repurposing. Human editors should own positioning, factual review, brand tone, and final approval. That separation reduces risk without sacrificing speed.

- Audit your current content mix for over-automation.

- Label or disclose synthetic assets when transparency matters.

- Use human review on sensitive topics, especially anything that could be mistaken for news or advocacy.

- Create a visible brand style that does not feel interchangeable with every other AI-assisted account.

- Measure trust indicators, not only clicks and impressions.

If you need operational support, Crescitaly’s services page is a useful reference point for structuring execution around growth, while the SMM panel is relevant when you need distribution workflows that are easier to manage at scale. The point is not to replace strategy with tools; it is to make tools serve a better strategy.

Build trust into the workflow, not just the caption

Trust is built before publish time. A useful process includes source checks, creative review, and a policy for what counts as “too synthetic” for a given audience. For example, a product teaser can tolerate a stylized AI image more easily than a sensitive public-affairs message.

One practical rule: if a post contains a claim, visual, or voice element that could trigger confusion, add context immediately. That can be a note in the caption, a source link, or a visible sign that the post is a concept render rather than real-world footage.
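The rule above can be turned into a simple pre-publish check. The following is a minimal sketch, not a prescribed implementation: the `Post` fields and the `missing_context` helper are hypothetical names chosen for illustration, and a real workflow would likely pull these flags from whatever scheduling or review tool the team already uses.

```python
from dataclasses import dataclass


@dataclass
class Post:
    """Hypothetical post record; field names are assumptions for this sketch."""
    caption: str
    has_claim: bool = False         # makes a factual or statistical claim
    synthetic_visual: bool = False  # AI-generated or heavily altered image/video
    synthetic_voice: bool = False   # AI-generated voiceover
    source_link: str = ""           # link that backs the claim, if any
    disclosure_note: str = ""       # e.g. "Concept render, not real footage"


def missing_context(post: Post) -> list:
    """Return a list of context gaps to fix before the post goes out."""
    problems = []
    if post.has_claim and not post.source_link:
        problems.append("claim without a source link")
    if (post.synthetic_visual or post.synthetic_voice) and not post.disclosure_note:
        problems.append("synthetic element without a disclosure note")
    return problems


draft = Post(caption="Our new packaging, reimagined", synthetic_visual=True)
for problem in missing_context(draft):
    print("Needs context:", problem)
```

An empty list means the post passes this particular check. It does not replace human review; it only makes the "add context immediately" step harder to skip.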

Content formats that survive skepticism

Not every format is equally vulnerable to AI backlash. Some content types are easier to defend because they are naturally contextual and human-led. The strongest formats for a trust-aware social media marketing strategy are those that make the brand’s reasoning visible.

- Annotated carousels that explain the source of a claim or a trend.

- Founder-led short videos that show the person behind the brand.

- Screen recordings and walkthroughs that demonstrate a process instead of pretending to be a spontaneous moment.

- Case studies that include real numbers, real constraints, and real lessons learned.

- Live Q&A posts where audience questions are answered in plain language.

These formats do not eliminate the need for AI. They simply reduce the risk that automation will flatten the message. If you are working in a competitive niche, clarity beats spectacle more often than most teams expect. This is where content quality and distribution discipline start to merge.

For platform-specific presentation standards, YouTube’s disclosure guidance is useful even outside video. It reinforces the broader expectation that synthetic or altered material should not mislead viewers about what is real.

Mistakes that trigger distrust and lower reach

Several common mistakes can make election-era skepticism work against your brand. They are not dramatic errors. They are small choices that send the wrong signal repeatedly.

- Publishing AI-generated images without context when the image could be mistaken for evidence.

- Using generic AI copy that repeats the same tone across every channel.

- Posting fast before fact-checking, especially on news-adjacent topics.

- Letting one person, or one automated pipeline, handle both creation and approval.

- Chasing engagement with provocative framing that sounds manipulative.

The easiest mistake to miss is sameness. When a feed starts to feel like a template factory, audiences disengage even if the content is technically correct. A strong social media marketing strategy keeps enough editorial variation to sound alive. That means different hooks, real examples, specific numbers, and a voice that reflects actual experience.
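One rough way to spot sameness before the audience does is to compare recent captions against each other. The sketch below uses Python's standard-library `difflib.SequenceMatcher`. The `near_duplicates` helper and the 0.8 threshold are assumptions for illustration, not a benchmark, and character-level similarity is only a proxy for how repetitive a feed feels.

```python
from difflib import SequenceMatcher
from itertools import combinations


def near_duplicates(captions, threshold=0.8):
    """Return (i, j, ratio) for caption pairs at or above the similarity threshold."""
    pairs = []
    for (i, a), (j, b) in combinations(enumerate(captions), 2):
        ratio = SequenceMatcher(None, a.lower(), b.lower()).ratio()
        if ratio >= threshold:
            pairs.append((i, j, round(ratio, 2)))
    return pairs


# Made-up captions, for illustration only.
recent_captions = [
    "Unlock your growth today with our new tool",
    "Unlock your growth today with our new app",
    "We tested three hooks and only one worked",
]

for i, j, ratio in near_duplicates(recent_captions):
    print(f"Captions {i} and {j} look templated (similarity {ratio})")
```

Pairs that get flagged are candidates for a rewrite with a different hook or a concrete example, not proof of a problem on their own.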

Another mistake is assuming transparency will hurt performance. In many cases, the opposite is true. Clear context can reduce friction, lower comment hostility, and improve the odds that users trust the post enough to share it.

How to measure performance when trust is part of the KPI set

If you are still optimizing only for reach, you are missing the signal that matters most in this environment. Election backlash makes it important to add trust-related indicators to your review process. These do not replace traditional metrics; they complement them.

Track indicators such as saves, qualified comments, repeat viewers, source-link clicks, and sentiment in replies. Compare the performance of highly automated posts with more human-led posts. Look for patterns in negative feedback. If people keep asking whether your content is real, the issue is not the audience. It is the presentation.
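The comparison between highly automated and human-led posts can be sketched as a small calculation. Everything here is an assumption made for illustration: the field names, the `workflow` labels, the equal weighting of the three signals, and the sample numbers, which are invented and are not real campaign data.

```python
from statistics import mean


def trust_rate(post):
    """Trust-related actions per 1,000 impressions (equal weighting is an assumption)."""
    if post["impressions"] == 0:
        return 0.0
    signals = post["saves"] + post["qualified_comments"] + post["source_link_clicks"]
    return 1000 * signals / post["impressions"]


def compare_by_workflow(posts):
    """Average the trust rate for each workflow label, e.g. automated vs human-led."""
    groups = {}
    for post in posts:
        groups.setdefault(post["workflow"], []).append(trust_rate(post))
    return {workflow: round(mean(rates), 2) for workflow, rates in groups.items()}


# Invented sample data, for illustration only.
sample_posts = [
    {"workflow": "automated", "impressions": 10000, "saves": 40,
     "qualified_comments": 5, "source_link_clicks": 15},
    {"workflow": "automated", "impressions": 8000, "saves": 20,
     "qualified_comments": 4, "source_link_clicks": 8},
    {"workflow": "human_led", "impressions": 5000, "saves": 60,
     "qualified_comments": 15, "source_link_clicks": 25},
]

print(compare_by_workflow(sample_posts))
```

Normalizing by impressions keeps a high-reach post from looking more trusted simply because more people saw it. Sentiment and repeat viewers are left out of this sketch because they usually need separate tooling to measure.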

It is also worth segmenting performance by content type. For instance, informational posts may benefit from more explicit sourcing, while entertainment content may need clearer visual identity. The point is to make your social media marketing strategy adaptable enough to handle different trust thresholds across the same account.

One operational advantage of a service-backed workflow is consistency. If your team uses internal systems or external support to maintain publishing cadence, you can spend more time on verification and audience insight instead of scrambling to produce every asset from scratch. That is where operational maturity becomes a competitive edge.

If you are ready to tighten execution, review Crescitaly’s services and see how distribution support can fit into a more disciplined content system. When speed matters, the right infrastructure helps you keep quality high.

Sources

The following sources are useful for understanding how AI scrutiny, search quality, and disclosure expectations shape modern publishing:

- The Verge: AI backlash is coming for elections

- Google Search Central: SEO Starter Guide

- YouTube Help: altered or synthetic content disclosure

Related Resources

If your team needs faster execution without losing control of quality, explore SMM panel services as part of a broader publishing system. The best results come when distribution tools support a clear editorial standard rather than replace it.

FAQ

Why does election backlash matter for non-political brands?

Election backlash changes the way people evaluate authenticity, especially when content looks synthetic or highly polished. Those expectations often carry over to commercial feeds, making audiences more skeptical of AI-generated posts. Brands that respond with more context and transparency usually maintain stronger engagement.

Should brands stop using AI in their content workflows?

No. The better approach is to use AI selectively and keep human review in place for claims, tone, and sensitive visuals. AI can still improve speed and consistency, but it should not be the final authority on messaging. That balance protects trust without slowing production too much.

How can I tell if my social media marketing strategy is too automated?

Look for repetitive captions, generic visuals, weak brand voice, and audience comments asking whether the content is real. If most posts feel interchangeable, the workflow may be over-automated. A healthier strategy uses automation for support, not for identity.

What kinds of posts are most vulnerable to AI skepticism?

Posts that look like evidence, news, or first-hand documentation are the most vulnerable. That includes political visuals, event coverage, before-and-after claims, and testimonial-style content. If there is any risk of confusion, add context or use a more clearly human-led format.

What metrics should I watch besides likes and reach?

Track saves, shares, repeat views, comments with substantive questions, and source-link clicks. These signals show whether the audience trusts the content enough to act on it. Negative sentiment and repeated authenticity questions are also useful early warnings.

How do disclosures help performance?

Disclosures reduce ambiguity, which can lower backlash and improve trust. In many cases, audiences respond better when they know what they are seeing and why it was created. Clear context can protect performance by preventing confusion from becoming the main story.