AI Backlash Is Coming for Elections: 2026 Guide

AI is changing how political and civic content moves through social platforms, and the backlash is becoming part of the distribution story. The Verge’s reporting on AI, elections, data centers, and jobs points to a wider shift: audiences are getting more skeptical of synthetic content, platform policies are tightening, and people are asking for clearer proof of what they see online.

For marketers, that does not mean avoiding automation altogether. It means building a social distribution system that can survive lower trust, higher scrutiny, and faster moderation. If your brand depends on organic reach, creator partnerships, or local audience engagement, the election-year environment is a useful stress test for your social media marketing strategy.

Key takeaway: the safest social media marketing strategy in 2026 is one that uses AI for speed, but relies on human proof, transparent sourcing, and audience-first messaging for trust.

What changed in the election content environment

The current backlash is not just about whether AI can generate realistic images or convincing captions. It is about how people interpret intent. During elections, audiences are especially sensitive to manipulation, impersonation, and misleading context, so even legitimate AI-assisted content can trigger distrust if it feels synthetic or evasive.

That matters beyond politics. Election cycles often become the testing ground for broader platform rules, ad review policies, and user expectations. When people learn to question AI-generated political posts, they become more cautious toward brand content too. That has direct implications for any social media marketing strategy that depends on attention, credibility, and repeat engagement.

The Verge’s reporting highlights a broader tension: AI is being sold as a productivity layer, but public backlash grows when the technology appears to replace judgment rather than support it. That is especially relevant for campaign-style content, rapid response posts, and high-volume publishing calendars.

Why AI backlash matters to brands and creators

When audiences see political misinformation, deepfakes, or overly polished synthetic assets, they do not compartmentalize that suspicion. They carry it into the rest of their feed. Brands that overuse AI without editorial control risk sounding generic, opportunistic, or evasive even when the content is harmless.

Creators face a parallel problem. Their value has always rested on perceived authenticity, and election periods increase the premium on visible expertise, personal voice, and on-the-ground experience. A creator-led campaign can still perform well, but only if the audience believes the message came from a real person with a real point of view.

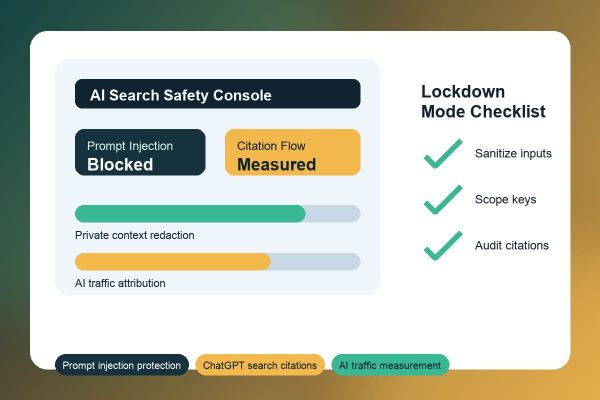

For social teams, the lesson is simple: AI can accelerate production, but it cannot replace a trust architecture. That trust architecture includes:

- clear authorship and accountability

- human review before publication

- visible citations for factual claims

- consistent tone across channels

- fast corrections when something is wrong

When these pieces are in place, AI becomes a utility. When they are absent, AI becomes a liability.

How to adjust your social media marketing strategy

If your publishing calendar includes civic topics, public policy themes, or election-adjacent commentary, your social media marketing strategy should be more conservative in execution and more disciplined in verification. The goal is not to post less; it is to post with more evidence and less ambiguity.

Use this sequence to audit your process:

- Map the content types that are most likely to trigger distrust, such as commentary, comparisons, or “breaking news” style posts.

- Identify where AI is used in your workflow: ideation, drafting, design, translation, captioning, or scheduling.

- Apply human review to anything that could be read as factual, persuasive, or politically sensitive.

- Standardize source links, bylines, and disclosure language when AI meaningfully shapes the output.

- Measure saves, shares, replies, and negative feedback separately so you can spot trust erosion early.
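The last step in that sequence is the easiest to automate. The Python sketch below is one hypothetical way to compare a post's saves, shares, replies, and negative feedback against an account baseline, normalized by impressions. The `PostMetrics` fields, the `trust_flags` helper, and the 30% tolerance are illustrative assumptions, not any platform's analytics API. Map them to whatever exports your tools actually provide.

```python
from dataclasses import dataclass


@dataclass
class PostMetrics:
    """Per-post engagement counts; field names are illustrative, not a platform API."""
    impressions: int
    saves: int
    shares: int
    replies: int
    negative_feedback: int  # e.g. hides, reports, "not interested" taps


def rates(m: PostMetrics) -> dict[str, float]:
    """Divide each signal by impressions so posts with different reach are comparable."""
    if m.impressions <= 0:
        # No reach means no meaningful rate; report zeros instead of dividing by zero.
        return {"saves": 0.0, "shares": 0.0, "replies": 0.0, "negative_feedback": 0.0}
    return {
        "saves": m.saves / m.impressions,
        "shares": m.shares / m.impressions,
        "replies": m.replies / m.impressions,
        "negative_feedback": m.negative_feedback / m.impressions,
    }


def trust_flags(post: PostMetrics, baseline: PostMetrics, tolerance: float = 0.3) -> list[str]:
    """List the signals that moved against trust by more than `tolerance` (hypothetical 30% default)."""
    current, base = rates(post), rates(baseline)
    flags = []
    # Positive signals: flag a drop below (1 - tolerance) of the baseline rate.
    for signal in ("saves", "shares", "replies"):
        if base[signal] > 0 and current[signal] < base[signal] * (1 - tolerance):
            flags.append(f"{signal} down")
    # Negative signal: flag a rise above (1 + tolerance) of the baseline rate.
    # With a zero baseline, any negative feedback at all is flagged.
    if current["negative_feedback"] > base["negative_feedback"] * (1 + tolerance):
        flags.append("negative_feedback up")
    return flags
```

With a baseline of 10,000 impressions, 200 saves, 100 shares, 50 replies, and 10 negative signals, a post on the same reach with 120 saves, 95 shares, 60 replies, and 25 negative signals returns `['saves down', 'negative_feedback up']`. Keeping each signal separate is the point: a blended engagement score would let the rise in replies mask the drop in saves.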

For brand accounts, the best use of AI is usually in the background: summarizing research, generating variant hooks, repurposing long-form content, or identifying posting windows. The visible post should still sound like your brand, not a machine optimizing for engagement at all costs.

If you are managing multiple client accounts, a structured workflow helps. You can use a central operations layer, such as an SMM panel, to coordinate publishing, but the editorial judgment still has to come from your team. Distribution scale without trust discipline is a short-term gain and a long-term drag.

Content formats that still earn trust

Not every format reacts to AI backlash the same way. Some formats are naturally more credible because they show process, context, or proof. Those formats should carry more of your 2026 publishing mix.

Best-performing trust-first formats

Short explainers, behind-the-scenes walkthroughs, original screenshots, and founder or expert commentary all perform well because they reduce ambiguity. They also give audiences cues that the content is anchored in human experience, not just generated language.

Examples include:

- before-and-after case studies with source notes

- screen-recorded tutorials with voiceover

- photo posts that show real product use

- threaded explanations that cite official references

- community Q&A posts that invite direct feedback

When in doubt, choose formats that can be verified by the viewer. If a post makes a claim, include evidence. If a post makes a recommendation, explain the criteria. If a post uses AI to speed production, say so where appropriate and make sure the final result still reflects your brand standards.

This is also where platform guidance matters. YouTube’s policies on synthetic and altered content are a good reminder that platforms increasingly expect disclosure and context when AI meaningfully changes what users see. Review the current rules in the YouTube help center and apply the same thinking to your social assets.

Common mistakes to avoid in 2026

The biggest mistake is assuming AI backlash is only a political issue. In practice, it affects normal brand content whenever a post feels too polished, too fast, or too detached from reality. That can hurt performance even if the content is technically accurate.

Avoid these pitfalls:

- publishing AI-generated visuals without editorial review

- using the same synthetic tone across every platform

- writing captions that claim authority without evidence

- ignoring comment sentiment after a controversial post

- treating engagement metrics as proof of trust

Another mistake is overcorrecting by removing automation from the workflow entirely. That creates unnecessary friction. Better teams build guardrails: AI can help generate first drafts, summarize campaign performance, or localize content, but humans decide framing, timing, and factual risk. That balance is what keeps a social media marketing strategy both scalable and defensible.

Historical note: earlier election cycles taught many teams that speed alone does not win audience trust. In 2026 that lesson matters more, because users are more alert to manipulation and more familiar with synthetic media.

What this means for brands, agencies, and publishers

Brands should expect more pressure to prove authenticity, especially when posting in sensitive news environments. Agencies need stronger QA processes, clearer approval chains, and a better way to document sources. Publishers and media-adjacent brands should be especially careful, because their audience already expects editorial rigor.

A practical workflow for 2026 looks like this:

- Draft with AI when speed matters.

- Fact-check every substantive claim against a primary source.

- Rewrite the opening to sound human and specific.

- Add visible context for any visual asset that may look synthetic.

- Publish, monitor, and respond quickly if audience trust signals dip.
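Teams that want to enforce this sequence inside a scheduling tool could encode the pre-publication steps as a simple gate. The Python sketch below is a hypothetical example: the `Draft` fields and the `publish_blockers` helper are illustrative names, not part of any specific product, and they cover only the fact-check, human-opening, and visual-context steps.

```python
from dataclasses import dataclass, field


@dataclass
class Draft:
    """A post awaiting publication; every field is a hypothetical checklist item."""
    text: str
    ai_drafted: bool
    claims: list[str] = field(default_factory=list)         # substantive factual claims in the post
    verified_claims: set[str] = field(default_factory=set)  # claims checked against a primary source
    opening_rewritten_by_human: bool = False
    visual_may_look_synthetic: bool = False
    visual_context_added: bool = False


def publish_blockers(d: Draft) -> list[str]:
    """Return the reasons a draft is not ready; an empty list means it can be scheduled."""
    blockers = []
    # Every substantive claim needs a primary-source check.
    unverified = [c for c in d.claims if c not in d.verified_claims]
    if unverified:
        blockers.append(f"unverified claims: {', '.join(unverified)}")
    # AI drafts need a human-written, specific opening.
    if d.ai_drafted and not d.opening_rewritten_by_human:
        blockers.append("opening still AI-drafted")
    # Visuals that could read as synthetic need visible context.
    if d.visual_may_look_synthetic and not d.visual_context_added:
        blockers.append("visual needs visible context")
    return blockers
```

An AI-drafted post with one unverified claim and an unlabeled visual returns three blockers; once the claim is verified, the opening is rewritten, and context is added, the list is empty. The monitoring step stays with people and analytics, since it happens after publication.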

If your team operates multiple profiles or client pages, pairing operational tools with human review can save time. You can see how Crescitaly structures execution through its services page, then decide where automation should stop and editorial control should begin.

The real strategic shift is not anti-AI. It is pro-accountability. That framing will matter more as elections intensify scrutiny on political speech, civic information, and any brand content that resembles persuasion at scale.

FAQ

Why is AI backlash increasing during elections?

Election periods heighten concern about misinformation, impersonation, and manipulative media. As audiences see more synthetic content, they become more cautious about anything that looks automated or lacks clear sourcing.

Should brands stop using AI in their social media marketing strategy?

No. AI still helps with drafting, summarizing, and scaling repetitive work. The better approach is to keep AI in support roles and apply human judgment to facts, tone, and publication decisions.

How can a brand make AI-assisted posts feel more trustworthy?

Use real examples, cite reliable sources, and keep the voice specific to the brand. Avoid generic claims and make sure the final post reflects a clear point of view rather than a machine-generated summary.

Do election-related platform rules affect non-political brands?

Indirectly, yes. Policies created for political content often shape moderation standards, disclosure expectations, and user behavior across the platform, which can influence how all brand content is received.

What metrics show that trust is weakening?

Watch for declining saves, more negative comments, lower completion rates, and reduced repeat engagement. A post can still get impressions while silently damaging audience confidence.

Is an SMM panel still useful in a trust-sensitive environment?

Yes, if it is used for controlled distribution and workflow efficiency rather than artificial credibility. The key is to pair operational scale with transparent editorial standards and careful content review.

Sources

Primary reporting referenced in this article: The Verge: AI backlash is coming for elections.

Additional authoritative references: Google Search Central SEO Starter Guide and YouTube synthetic and altered content policy guidance.

Related Resources

For workflow support, review Crescitaly services to understand how execution layers can support editorial operations without replacing judgment.

If you need a distribution toolset, explore the Crescitaly SMM panel for scalable social management that can be aligned with a human-led review process.